Keyword [CliqueNet]

Yang Y, Zhong Z, Shen T, et al. Convolutional Neural Networks with Alternately Updated Clique[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 2018: 2413-2422.

1. Overview

This paper proposes CliqueNet, which features:

- improved information flow

- both forward and backward connections between any two layers in the same block

- a combination of recurrent structure and feedback mechanism

1.1. Contribution

- CliqueNet

- multi-scale feature strategy

- experiments on five datasets

1.2. Related Work

1.2.1. Network

- Multi-column networks

- Deeply-Fused Nets

- GoogLeNet

- Wide residual networks (WRN)

- FractalNet

- ResNet

- DenseNet

- Dual path networks (DPN)

2. Methods

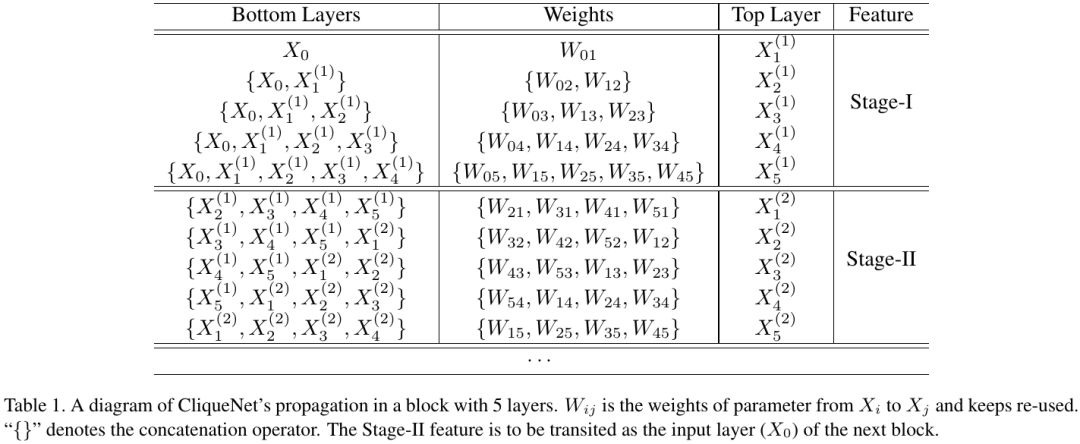

2.1. Clique Block

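The recurrent feedback structure maximizes communication among all layers in the block: every layer is both an input to and an output of every other layer.

When the features of several layers are concatenated, the weight matrices of the corresponding layers are concatenated as well.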
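The alternate update can be illustrated with a minimal sketch. It makes several simplifying assumptions that are not in these notes: each layer's feature is a plain vector, each convolution is replaced by a dense matrix, and the names `clique_block` and `W[(src, dst)]` are hypothetical. Summing `W[(src, dst)]` applied to each source feature gives the same result as concatenating the features and the matching weight matrices and multiplying once. Stage I initializes the layers from the block input with forward connections only. Stage II then refreshes each layer from all other layers, using the newest available version of each.

```python
def relu(v):
    return [max(0.0, x) for x in v]

def matvec(W, x):
    # Dense stand-in for a convolution: one row of W per output channel.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def weighted_sum(W, feats, sources, dst):
    # Equivalent to concatenating feats[src] and the matching weights.
    total = None
    for src in sources:
        term = matvec(W[(src, dst)], feats[src])
        total = term if total is None else [a + b for a, b in zip(total, term)]
    return total

def clique_block(x0, W, num_layers, loops=1):
    """Hypothetical sketch; layer 0 is the block input, layers 1..n are the clique."""
    if num_layers < 2:
        raise ValueError("a clique needs at least two layers")
    feats = {0: x0}
    # Stage I: forward-only initialization from the input and earlier layers.
    for i in range(1, num_layers + 1):
        feats[i] = relu(weighted_sum(W, feats, range(0, i), i))
    stage1 = [feats[i] for i in range(1, num_layers + 1)]
    # Stage II: update each layer in turn from all other layers (input excluded).
    # Layers j < i were already refreshed in this loop; layers j > i are older.
    for _ in range(loops):
        for i in range(1, num_layers + 1):
            others = [j for j in range(1, num_layers + 1) if j != i]
            feats[i] = relu(weighted_sum(W, feats, others, i))
    stage2 = [feats[i] for i in range(1, num_layers + 1)]
    return stage1, stage2

# Toy example with two layers and one channel per layer.
W = {(0, 1): [[1.0]], (0, 2): [[1.0]], (1, 2): [[2.0]], (2, 1): [[0.5]]}
stage1, stage2 = clique_block([1.0], W, num_layers=2)
print(stage1, stage2)  # [[1.0], [3.0]] [[1.5], [3.0]]
```

Note that `W[(1, 2)]` is used in both stages, which reflects the weight reuse that gives the block its recurrent character.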

2.2. Extra Techniques

2.2.1. Attention Transition

- added only to the transition layers
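As a rough illustration, the sketch below assumes the attention is a channel-wise gate in the squeeze-and-excitation style: global average pooling, a small ReLU layer, then a sigmoid layer whose outputs rescale each channel. The function name `channel_attention` and the weight arguments are hypothetical, and the layer sizes are left to the caller.

```python
import math

def channel_attention(fmap, W1, b1, W2, b2):
    """Hypothetical sketch. fmap is a list of channels, each an H x W nested list."""
    # Squeeze: one average value per channel.
    pooled = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]
    # First fully connected layer with ReLU.
    hidden = [max(0.0, sum(w * p for w, p in zip(row, pooled)) + b)
              for row, b in zip(W1, b1)]
    # Second fully connected layer with sigmoid: one gate in (0, 1) per channel.
    gates = [1.0 / (1.0 + math.exp(-(sum(w * h for w, h in zip(row, hidden)) + b)))
             for row, b in zip(W2, b2)]
    # Excite: rescale every value of a channel by that channel's gate.
    scaled = [[[g * v for v in row] for row in ch] for ch, g in zip(fmap, gates)]
    return scaled, gates

# Two 1x2 channels, one hidden unit, all-zero weights: every gate is sigmoid(0).
fmap = [[[2.0, 4.0]], [[6.0, 8.0]]]
scaled, gates = channel_attention(fmap, [[0.0, 0.0]], [0.0], [[0.0], [0.0]], [0.0, 0.0])
print(gates)  # [0.5, 0.5]
```

With trained weights the gates differ per channel, so the transition can suppress less useful feature maps before passing them to the next block.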

2.2.2. Bottleneck and Compression

- introduce bottleneck layers into the block

- apply compression to the features that feed the loss function, before global pooling
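The compression step can be sketched as a 1x1 convolution that reduces the channel count, followed by global average pooling. This assumes the compression is a plain 1x1 convolution; the helper names `conv1x1` and `global_avg_pool` and the toy weights are hypothetical.

```python
def conv1x1(fmap, W):
    """fmap: C_in channels, each H x W. W: C_out x C_in mixing weights."""
    height, width = len(fmap[0]), len(fmap[0][0])
    return [[[sum(W[o][c] * fmap[c][y][x] for c in range(len(fmap)))
              for x in range(width)]
             for y in range(height)]
            for o in range(len(W))]

def global_avg_pool(fmap):
    # One scalar per channel: the mean over all spatial positions.
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]

# Compress two channels into one by averaging them, then pool.
features = [[[1.0, 2.0], [3.0, 4.0]],
            [[4.0, 3.0], [2.0, 1.0]]]
compressed = conv1x1(features, [[0.5, 0.5]])
pooled = global_avg_pool(compressed)
print(len(compressed), pooled)  # 1 [2.5]
```

Because the 1x1 convolution mixes channels independently at every spatial position, it shrinks the vector that reaches the classifier without changing the spatial layout.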

2.3. Implementation

3. Experiments

3.1. Details

- Dropout with rate 0.2 after each convolutional layer

3.2. Comparison

3.3. Stage I vs Stage II